Fixes for the “Broken” Nursing Home Star Ratings Part 1: Survey

04 Apr

It’s not a surprise to see Nursing Homes in the news, and it’s usually not to paint a sunny picture. Issues related to COVID-19 have understandably dominated much of the recent coverage, but a March article in the New York Times took aim at a different target – CMS’s Star Ratings system. Articles in the Times often generate a raft of responses from the industry, and this piece is no different, with responses ranging from point-by-point debate to a set of nuanced suggestions for a path forward. The chatter is timely, too, as a new administration has the opportunity to gather feedback and implement meaningful improvements. Since we spend most of our days analyzing Star Ratings data, we thought we’d seize on this moment of increased awareness to offer a few suggestions for how the Star Rating system could be improved.

This piece is the first in a four-part series in which we focus on each of the three domains of the Five-Star System, with a final wrap-up in the fourth installment. For today, we start with the Health Inspection / Survey domain, and our suggestions can be summarized in a few short points. Read on for more detail.

- Shorter, more frequent Standard Surveys with results published sooner

- Standardization among states in how surveys are conducted and deficiencies cited

- Simplification of survey cycles and deficiency points

The Gravity of the Survey

Survey is by far the most important of the three domains. The Overall Star Rating starts with the Survey domain and more often than not, that’s where it ends. The Staffing and Quality domains can only confer ‘bonus’ or ‘penalty’ stars, and as a result, about half of Nursing Homes nationwide have Overall Ratings that match their Survey Rating.
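To see why Survey dominates, it helps to walk through how the other two domains adjust it. Below is a simplified Python sketch of the adjustment steps as we understand CMS’s published methodology. It leaves out special cases (for example, the rules for Special Focus Facilities), so treat it as an illustration rather than CMS’s own calculation.

```python
def overall_rating(survey: int, staffing: int, quality: int) -> int:
    """Simplified sketch: the Overall Rating starts from the Survey Rating.

    Assumes the adjustment rules as we understand them; special cases
    such as Special Focus Facility rules are deliberately ignored.
    """
    def clamp(stars: int) -> int:
        # Ratings never drop below 1 star or rise above 5 stars.
        return max(1, min(5, stars))

    rating = survey  # Step 1: start from the Survey (health inspection) rating.

    # Step 2: staffing bonus (4-5 stars and better than Survey) or penalty (1 star).
    if staffing >= 4 and staffing > survey:
        rating += 1
    elif staffing == 1:
        rating -= 1
    rating = clamp(rating)

    # Step 3: quality measure bonus (5 stars) or penalty (1 star).
    if quality == 5:
        rating += 1
    elif quality == 1:
        rating -= 1
    rating = clamp(rating)

    # Step 4: a 1-star Survey home can gain at most one star overall.
    if survey == 1:
        rating = min(rating, 2)
    return rating


print(overall_rating(2, 3, 3))  # 2: average staffing and quality change nothing
print(overall_rating(1, 5, 5))  # 2: capped at a one-star upgrade
```

In this sketch, any home whose staffing and quality ratings fall in the unremarkable middle simply keeps its Survey Rating, which is consistent with so many Overall Ratings matching the Survey Rating.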

While the inclusion of Focused Infection Control Surveys into the Survey Rating has changed the landscape, deficiencies found during the annual Standard Survey still contribute the most towards a facility’s Survey Rating. Naturally, with one Survey representing such a large part of a home’s Overall Rating for the next three years, it’s no shock that homes would prefer not to be surprised by these critical events. Leaving aside the issue of staffing peaks – what the Times thinks is prior knowledge could also be explained by summoning on-call reinforcements after the inspectors arrive – it’s clear that putting so much weight on an annual event creates potentially perverse incentives. With Staffing and Quality both being updated quarterly, it seems almost counterintuitive that the most weighty element of the most weighty domain is only updated yearly at best. One solution is to reduce the weighting of Survey in the Overall Rating. We’ll explore that idea in part four of this series. But might it also make sense to increase the frequency of Standard Surveys?

Frequency

In a since-deleted blog post, former CMS Administrator Seema Verma suggested the opposite. She advocated instead for decreased frequency of inspections for top performing homes, with each set to receive a survey only every 30 months. We think this is misguided. Homes in the Special Focus Facility program are surveyed at least every six months, and we think that expanding this concept out to non-SFFs would make sense.

Operators themselves may not be thrilled with the prospect of more surveys, but at least they’d get another shot at it in six months instead of a year. Obviously, sending out an army of surveyors costs money, so budget constraints are also an issue. To help with both, the Standard Survey could be broken up or subdivided in some way: the current 750-page manual covers a lot of ground and turns the event into a grueling marathon for all. If this is too far a reach, an alternative to scheduling Surveys arbitrarily every six months is to trigger them based on issues found in the facility’s MDS records. For example, a rash of falls or a spike in the number of residents on antipsychotic medication is cause for concern and could merit an additional inspection. At the suggestion of the CEO of a small chain of highly regarded homes, we also think it makes sense for surveyors and MDS coordinators to be certified, and for MDSs to be audited more frequently. We’ll have more on this concept in our installment on the Quality domain. Either way, CMS should consider publishing Survey results faster, even before the Plan of Correction is submitted. The current delay of up to four months robs consumers of the timely data they need to make informed decisions.
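An MDS-based trigger could be as simple as comparing a facility’s rates quarter over quarter. The sketch below is purely hypothetical: the `QuarterlyMds` summary, the 1.5x spike ratio and the 5% floor are our own illustrative choices, not anything CMS has proposed.

```python
from dataclasses import dataclass


@dataclass
class QuarterlyMds:
    """Hypothetical per-facility summary built from one quarter of MDS data."""
    residents: int
    falls: int
    on_antipsychotics: int


def rate(count: int, residents: int) -> float:
    return count / residents if residents else 0.0


def needs_extra_survey(prev: QuarterlyMds, curr: QuarterlyMds,
                       spike_ratio: float = 1.5, min_rate: float = 0.05) -> bool:
    """Flag a facility when a tracked rate jumps sharply from one quarter to the next.

    spike_ratio and min_rate are illustrative thresholds, not CMS values.
    """
    pairs = [
        (rate(prev.falls, prev.residents), rate(curr.falls, curr.residents)),
        (rate(prev.on_antipsychotics, prev.residents),
         rate(curr.on_antipsychotics, curr.residents)),
    ]
    for before, after in pairs:
        # The floor keeps one or two events in a small home from triggering a visit.
        if after >= min_rate and after >= spike_ratio * before:
            return True
    return False


last_quarter = QuarterlyMds(residents=100, falls=3, on_antipsychotics=10)
this_quarter = QuarterlyMds(residents=100, falls=9, on_antipsychotics=11)
print(needs_extra_survey(last_quarter, this_quarter))  # True: falls tripled
```

A real trigger would need risk adjustment and more measures, but even this crude rule shows how inspections could follow the data rather than the calendar.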

We leave it to CMS to work out the details, but shorter and more frequent surveys would give homes more chances to work on their score, and it would provide consumers with better and more timely information. In some ways, CMS has already started down this path.

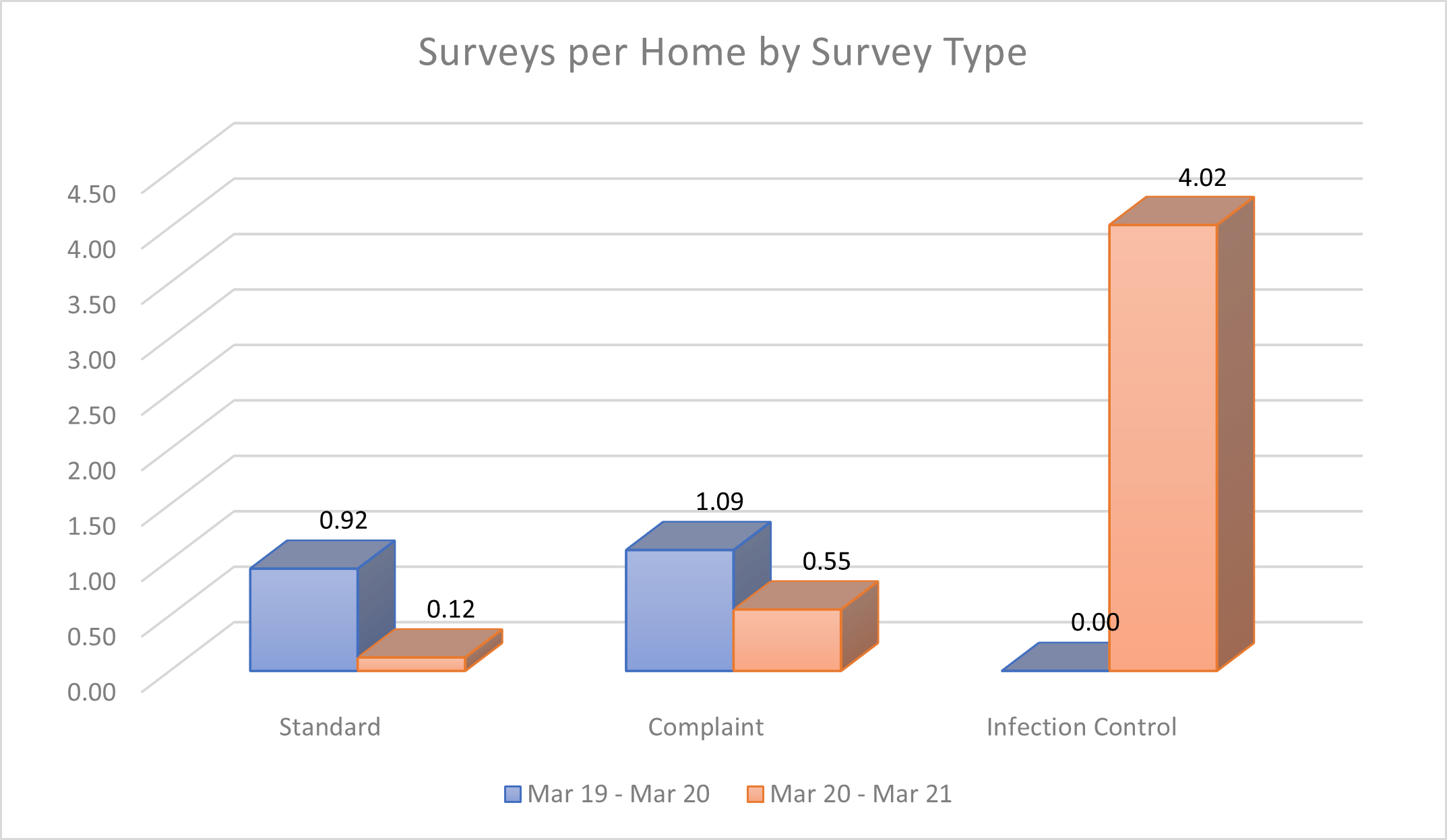

In the past year, surveyors visited homes for a Focused Infection Control Survey an average of at least four times each, and that number is almost certainly missing some of the more recent Surveys. So if they can manage all that during a pandemic, we know they can muster the troops to get into the buildings more often. We’d like to see them do just that.

State Variation

In another related blog post, former CMS Administrator Verma also pointed to the inconsistencies among State Survey Agencies (SSAs). This is, and has been, one of our biggest concerns about Survey. Consider these two facilities in the Memphis area.

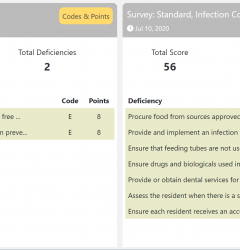

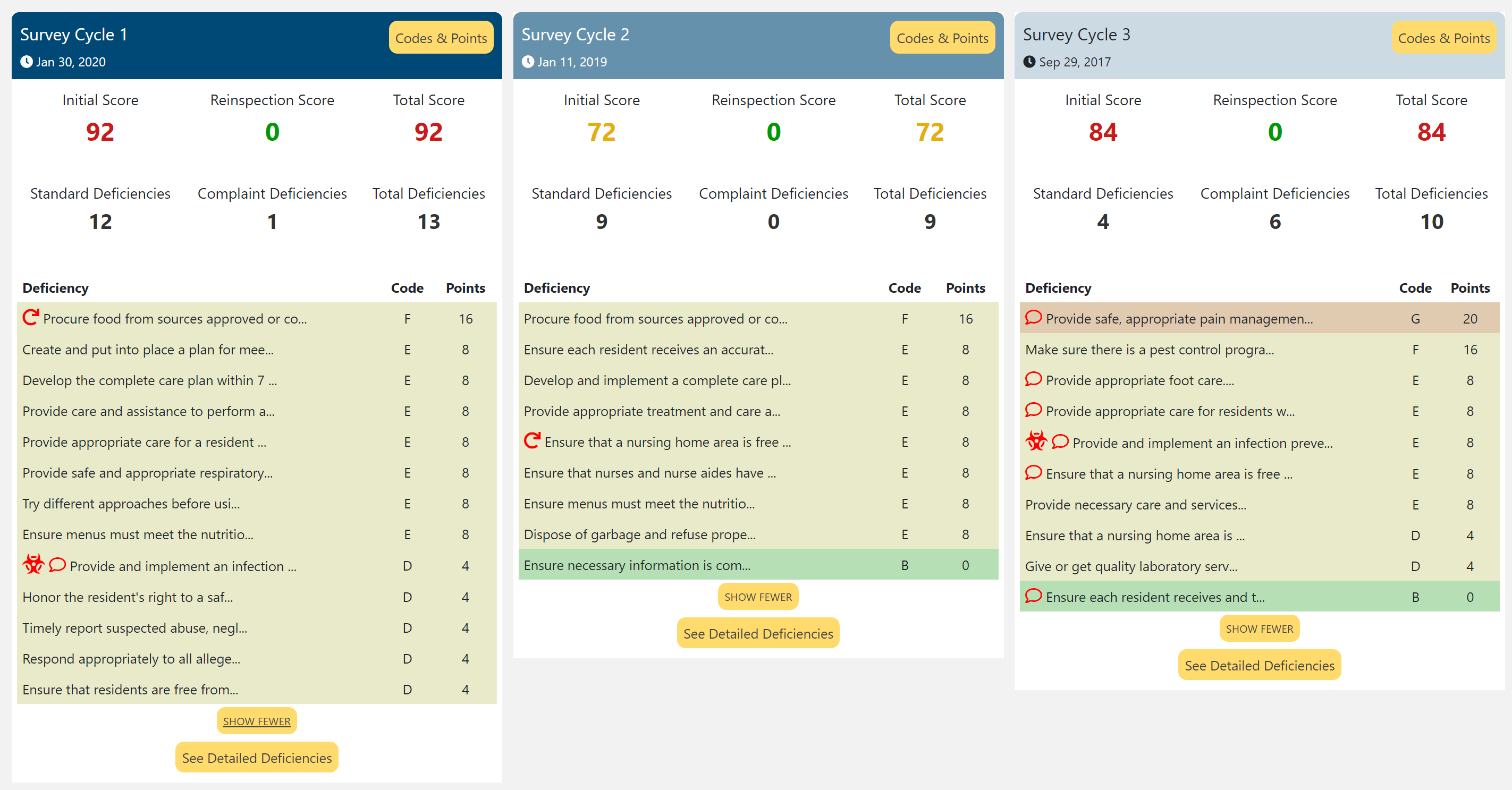

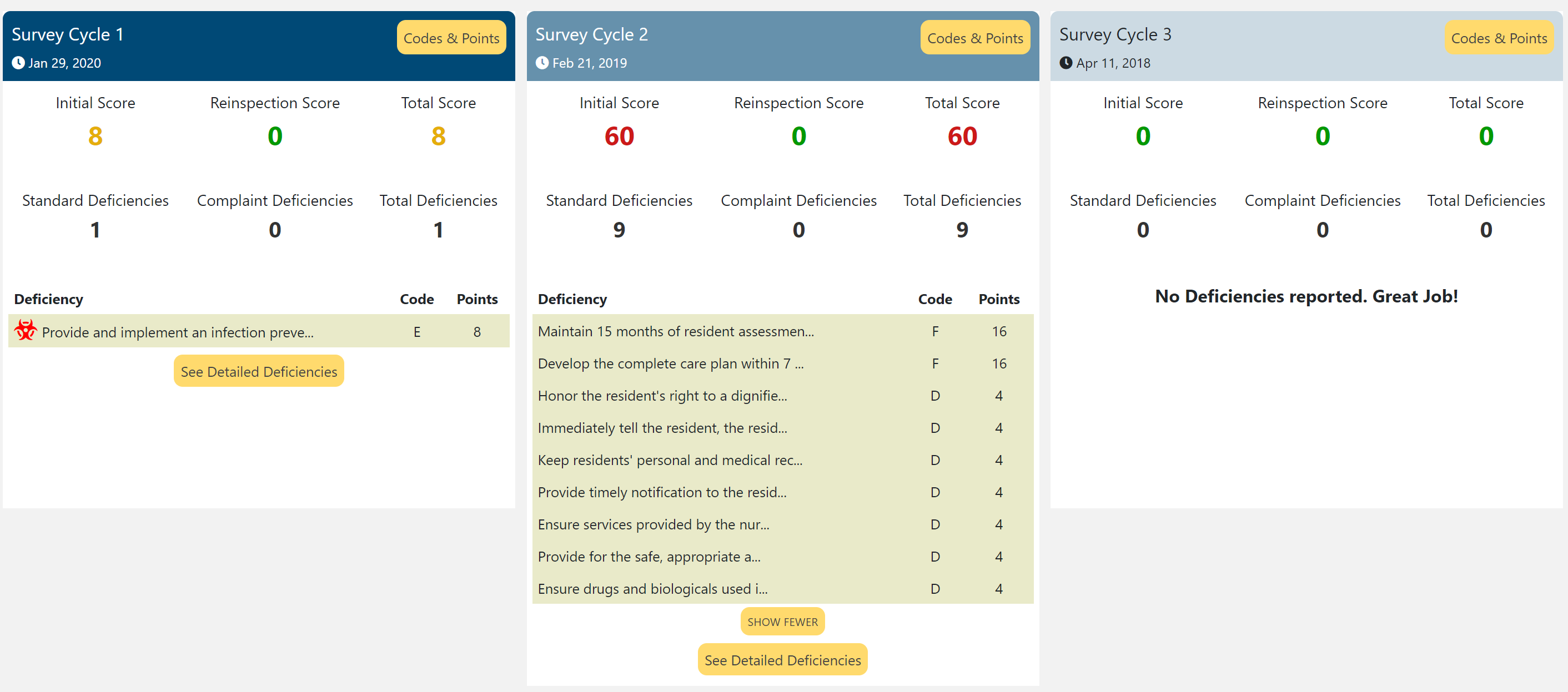

They’re only 10 miles apart, so if you’re looking for care, it’s reasonable to consider both. They’re both two stars in Survey, so if we do as CMS says and look more deeply into the ratings, what do we find?

West Memphis Wellness, LLC – West Memphis, AR

Regional One Health Subacute Care – Memphis, TN

Now we’re confused. It’s easy to say that you’d prefer Regional One, since they have fewer deficiencies and fewer points. But if West Memphis is really that bad, why do they both have a 2-star Survey Rating?

The answer lies in the puzzling variation in how the SSAs and individual surveyors interpret the rules. While every facility is compared only against others in its own state for the purposes of the Survey Rating, there are massive differences in how each state conducts its surveys, even though they all use the same manual.
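Here is a rough sketch of what “compared only against their state” means in practice. It assumes CMS’s within-state cut points as we understand them (the best 10% of scores earn 5 stars, the worst 20% earn 1 star, and the middle 70% are split evenly into 4, 3 and 2 stars) and ignores ties and other details. All scores are made up.

```python
def survey_star(score: float, state_scores: list[float]) -> int:
    """Approximate Survey star for a weighted score relative to its own state.

    Lower scores are better. Assumed cut points: best 10% -> 5 stars,
    three equal bands of the middle 70% -> 4, 3, 2 stars, worst 20% -> 1 star.
    """
    n = len(state_scores)
    # Share of in-state facilities with a strictly better (lower) score.
    pct_better = sum(s < score for s in state_scores) / n
    if pct_better < 0.10:
        return 5
    if pct_better < 0.10 + 0.70 / 3:
        return 4
    if pct_better < 0.10 + 2 * 0.70 / 3:
        return 3
    if pct_better < 0.80:
        return 2
    return 1


# Hypothetical in-state score distributions; lower is better.
heavy_citing_state = [50 + i for i in range(100)]  # every other home scores 50 or worse
middle_state = list(range(100))
light_citing_state = [i * 0.5 for i in range(100)]  # every other home scores below 50

for scores in (heavy_citing_state, middle_state, light_citing_state):
    print(survey_star(50, scores))  # prints 5, then 3, then 1
```

The identical score of 50 earns anywhere from 1 to 5 stars depending only on how heavily the state’s surveyors cite deficiencies, which is how two homes with very different records can share the same Survey Rating.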

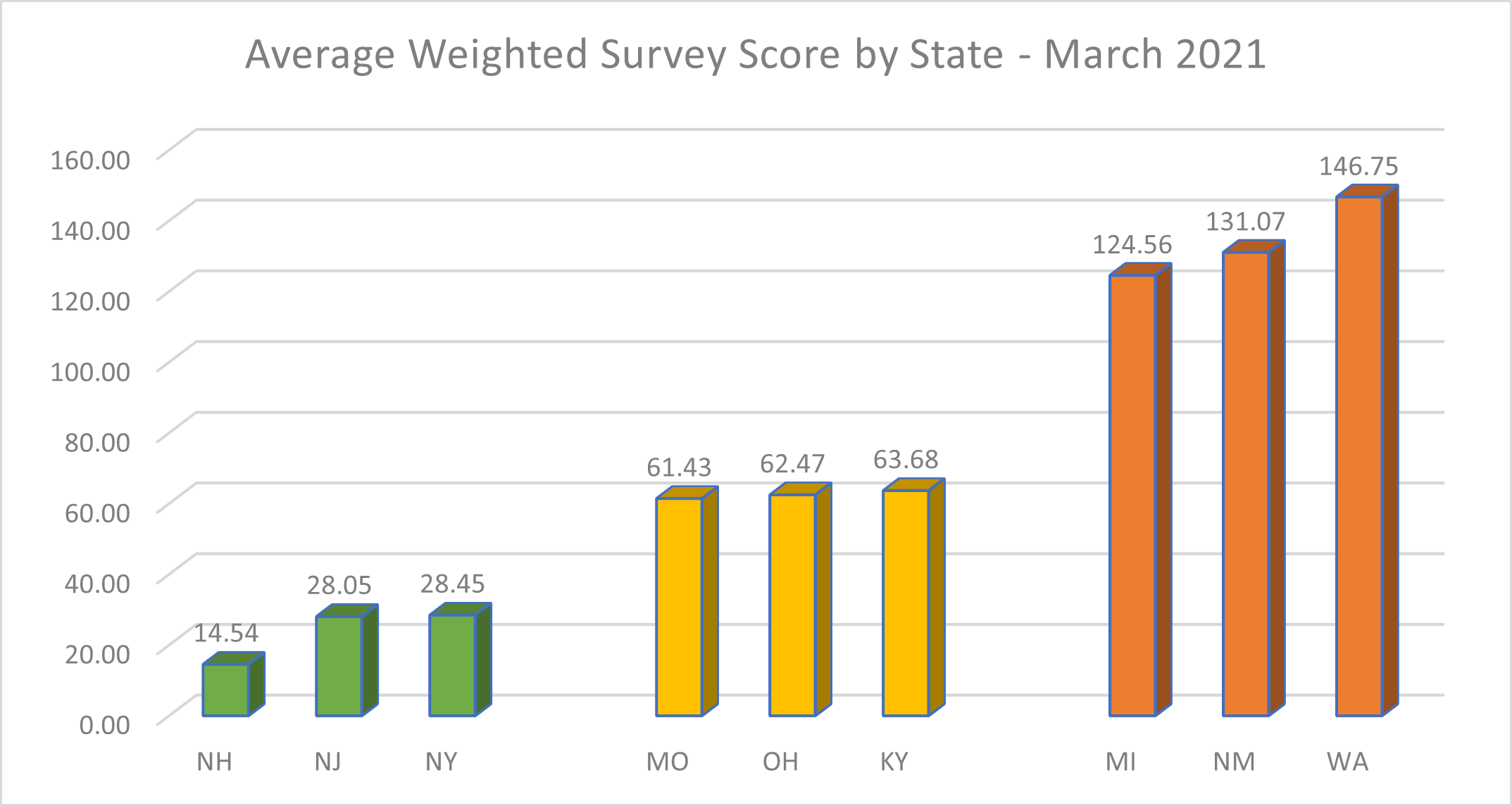

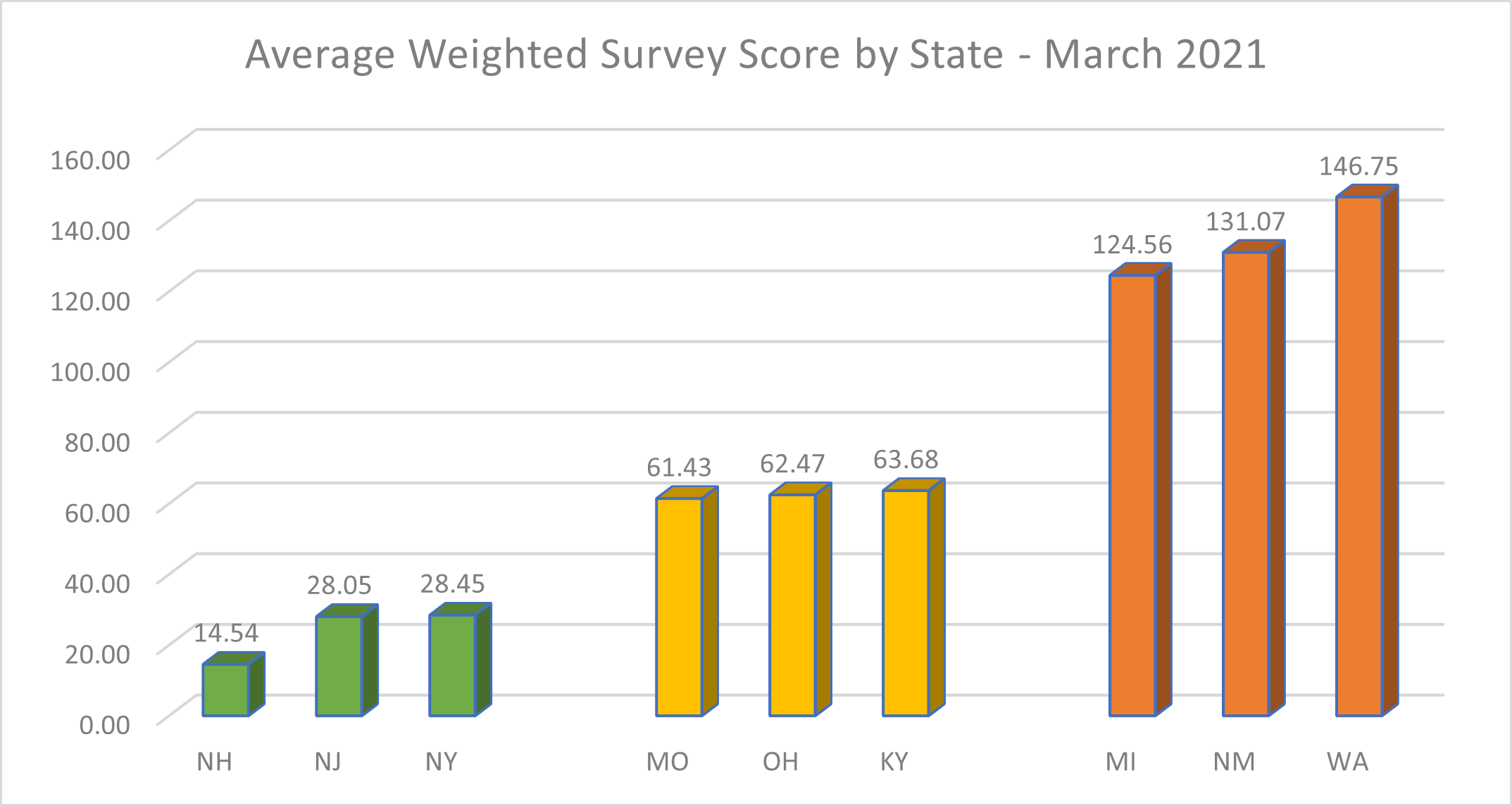

The above chart shows the top, bottom and middle 3 states in terms of average weighted survey score. Why is there such wide variation in enforcement? Why has so little changed since March 2019, when CMS ostensibly made this a priority?

Average Weighted Survey Score by State

| | Lowest | Highest | Median | Average | Standard Deviation | Range |
|---|---|---|---|---|---|---|
| March 2019 | 10.85 (RI) | 146.18 (NM) | 54.38 (TN) | 59.87 | 28.72 | 135.32 |
| March 2021 | 14.54 (NH) | 146.75 (WA) | 62.47 (OH) | 64.37 | 28.02 | 132.21 |
| % change | | | | 7.52% | -2.42% | -2.30% |

The numbers show that there’s been virtually no change since March 2019 in the variability between states, whether measured by standard deviation or by range. CMS is constantly asking consumers to look beyond the Overall Star Rating and research more about each home. But for residents and families trying to choose between facilities in multiple states, things just don’t seem to make sense.
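The percent-change figures can be checked directly from the March 2019 and March 2021 values in the table above. Recomputing from the rounded table values reproduces the 7.52% and -2.30% figures; the standard deviation comes out at about -2.44% rather than -2.42%, presumably because the inputs in the table are rounded.

```python
def pct_change(old: float, new: float) -> float:
    """Percent change from an old value to a new one."""
    return (new - old) / old * 100


# (March 2019, March 2021) values taken from the table above.
stats = {
    "Average": (59.87, 64.37),
    "Standard Deviation": (28.72, 28.02),
    "Range": (135.32, 132.21),
}
for name, (march_2019, march_2021) in stats.items():
    print(f"{name}: {pct_change(march_2019, march_2021):.2f}%")
# Average: 7.52%
# Standard Deviation: -2.44%
# Range: -2.30%
```

Either way, a change of a couple of percent in spread over two years is effectively no change at all.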

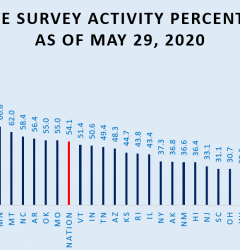

Unfortunately, CMS appears to be going in the wrong direction on this. As others have pointed out, there’s extreme variation between states in both the frequency of Focused Infection Control Surveys per facility and in the number of deficiencies found.

If shorter, more frequent Surveys are the path forward, they’ll need to be more equal, too. We’re not suggesting that CMS should force Survey to be national like the other domains, but they do need to get serious about reducing the variation between states.

Training is a smart step. Sending federal Survey experts out to work with the SSAs can help reinforce consistency, but sometimes the carrot needs to be backed up with the stick. We suggest that CMS hold audits of each SSA’s performance against the national standards and punish those not conforming by withholding a percentage of Medicaid funding. Money talks, and surveyors obviously need an incentive to become consistent.
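As one possible starting point for such an audit, CMS could flag states whose average weighted survey score sits unusually far from the national mean. This is a hypothetical sketch: the function name, the z-score approach, the 1.5 threshold and the state scores are our own choices, and a large deviation should prompt a review rather than serve as proof of inconsistent surveying.

```python
from statistics import mean, pstdev


def flag_outlier_states(avg_scores: dict[str, float], z_limit: float = 1.5) -> list[str]:
    """Return states whose average weighted survey score is far from the national mean.

    Uses a simple z-score; z_limit is an illustrative threshold, not a CMS standard.
    """
    values = list(avg_scores.values())
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return []  # every state scores the same, so nothing stands out
    return sorted(s for s, v in avg_scores.items() if abs(v - mu) / sigma > z_limit)


# Made-up averages for eight hypothetical states.
scores = {
    "State A": 60, "State B": 62, "State C": 58, "State D": 61,
    "State E": 59, "State F": 63, "State G": 140, "State H": 10,
}
print(flag_outlier_states(scores))  # ['State G', 'State H']
```

Flagging in both directions matters: a state that cites almost nothing may be as far off the national standard as one that cites everything.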

Lost in a Maze of Cycles and Points

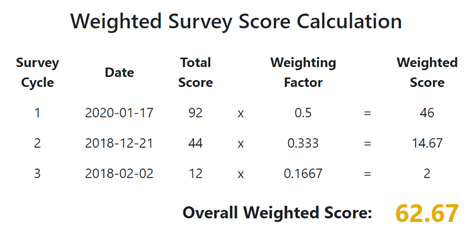

Those who do dare to dive into Survey details are rewarded with a labyrinth of detail when it comes to deficiencies and their points. First, one has to come to grips with the Survey Cycles. Understanding the weighting of the three cycles is easy enough – it makes sense to weight the more recent deficiencies more heavily.
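The weighting itself is just a weighted sum. The sketch below assumes CMS’s published cycle weights of 1/2, 1/3 and 1/6 for cycles 1, 2 and 3, and it omits other adjustments, such as points added for repeat revisits and the reweighting used when a home has fewer than three cycles.

```python
from fractions import Fraction

# Assumed cycle weights: the most recent cycle counts the most.
CYCLE_WEIGHTS = {1: Fraction(1, 2), 2: Fraction(1, 3), 3: Fraction(1, 6)}


def weighted_survey_score(cycle_points: dict[int, float]) -> float:
    """Combine per-cycle deficiency points into one weighted score (lower is better).

    Revisit points and missing-cycle reweighting are omitted for simplicity.
    """
    return float(sum(CYCLE_WEIGHTS[c] * pts for c, pts in cycle_points.items()))


print(weighted_survey_score({1: 60, 2: 30, 3: 12}))  # 42.0 = 30 + 10 + 2
```

Exact fractions avoid the rounding noise that 1/3 and 1/6 would otherwise introduce.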

But the cycles themselves are confusing. They’re a mashup of deficiencies found on Standard, Complaint, and Focused Infection Control Surveys. It’s good to have this variety, but the deficiencies get assigned to cycles in different ways. Deficiencies from Standard Surveys stay put until a new Standard Survey is conducted, while those from Complaint or Focused Infection Control Surveys move between cycles based on a rolling 12-month period.
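The two assignment rules can be sketched side by side. This assumes the most recent three Standard Surveys map to cycles 1 through 3, and that Complaint and Focused Infection Control deficiencies fall into consecutive 12-month windows counted back from the rating date; measuring those windows in whole calendar months is our own approximation.

```python
from datetime import date
from typing import Optional


def standard_survey_cycles(survey_dates: list[date]) -> dict[date, int]:
    """Map the three most recent Standard Surveys to cycles 1-3 (most recent first).

    These assignments change only when a new Standard Survey takes place.
    """
    recent = sorted(survey_dates, reverse=True)[:3]
    return {d: i for i, d in enumerate(recent, start=1)}


def full_months_between(earlier: date, later: date) -> int:
    months = (later.year - earlier.year) * 12 + (later.month - earlier.month)
    if later.day < earlier.day:
        months -= 1  # the final month is not yet complete
    return months


def rolling_cycle(citation_date: date, as_of: date) -> Optional[int]:
    """Cycle for a Complaint or Focused Infection Control deficiency.

    Assumed windows: 0-11 full months ago -> cycle 1, 12-23 -> cycle 2,
    24-35 -> cycle 3, older -> no longer counted.
    """
    months = full_months_between(citation_date, as_of)
    if months < 36:
        return months // 12 + 1
    return None


as_of = date(2021, 3, 1)
print(rolling_cycle(date(2019, 9, 1), as_of))  # 2 (18 months ago)
```

Run the same deficiency through `rolling_cycle` a few months later and it can slide into the next cycle, even though nothing about the home’s Standard Survey history has changed.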

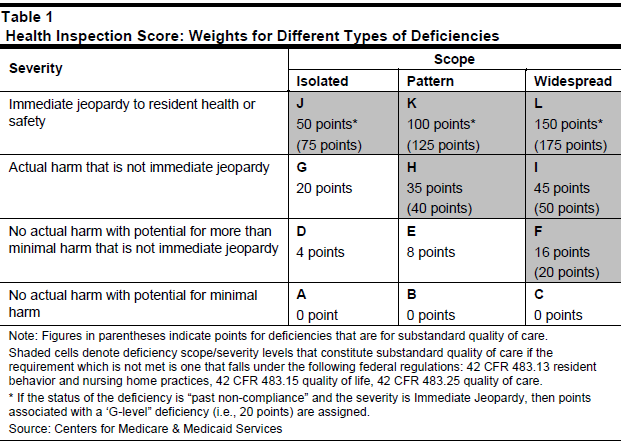

But it doesn’t stop there. When we dive into the scoring of each deficiency, we find that an advanced degree in mathematics is required to arrive at a point value for each one.

Depending on its status as “past non-compliance”, “substandard quality of care” or not, a K-level deficiency could be 20, 100 or 125 points. Confused? Add to this the fact that some deficiencies have waivers issued and are now 0 points, and it’s no surprise that even administrators are sometimes at a loss as to how they’ve been scored. Anyone hoping to understand what’s going on has to hold the above chart in one hand, the list of F-Tags in the other, and then try to match both up to a dataset that’s massive, opaque, and doesn’t even contain the number of points given for each deficiency.
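To show how many branches a single deficiency’s score can take, here is a Python sketch of the point lookup as we understand CMS’s scope and severity grid. It includes the higher values for substandard quality of care and the rule that past non-compliance at the J, K or L level is scored like a G. Treat the tables as our reading of the published grid, the `sqc` flag as something the caller must already know (eligibility depends on the F-Tag), and the `waived` flag as modeling the zero-point treatment described above.

```python
# Base points per scope/severity letter, as we understand CMS's grid.
BASE_POINTS = {
    "A": 0, "B": 0, "C": 0,
    "D": 4, "E": 8, "F": 16,
    "G": 20, "H": 35, "I": 45,
    "J": 50, "K": 100, "L": 150,
}
# Higher values used when the deficiency counts as substandard quality of care.
SQC_POINTS = {"F": 20, "H": 40, "I": 50, "J": 75, "K": 125, "L": 175}


def deficiency_points(level: str, sqc: bool = False,
                      past_noncompliance: bool = False, waived: bool = False) -> int:
    """Points for one deficiency under our reading of the grid."""
    level = level.upper()
    if waived:
        return 0  # waived deficiencies contribute nothing
    if past_noncompliance and level in ("J", "K", "L"):
        return BASE_POINTS["G"]  # immediate jeopardy already corrected: scored as a G
    if sqc and level in SQC_POINTS:
        return SQC_POINTS[level]
    return BASE_POINTS[level]


for kwargs in ({"past_noncompliance": True}, {}, {"sqc": True}):
    print(deficiency_points("K", **kwargs))  # prints 20, then 100, then 125
```

Four inputs and three lookup tables for a single citation, and none of the resulting point values appear in the public deficiency data.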

We suggest that CMS dramatically simplify both the points system and how the three cycles work. The media will continue to focus on the most outrageous occurrences of abuse and neglect, but those of us trying to understand what’s going on at the rest of the nation’s homes deserve clarity on how severe a violation is, and whether or not we should be concerned.

A Clearer Tomorrow

Folks trying to find quality care deserve clarity, consistency and timely information. CMS has woven a confusing web in the Survey domain, and this moment provides a clear opportunity to untangle it all. By investing more in shorter, more frequent surveys with consistent national application and a simpler point system, they can make significant progress toward helping people make the best decision possible.

In the next installment in this series, we’ll take on the Staffing domain. To be notified about that, subscribe to our newsletter below.

Stay In Touch

Sign up for our newsletter to get the latest updates, insights and analysis from the StarPRO team